Ofcom lowers the bar for online video censorship

UK telecoms regulator is adapting quickly to its new role as chief digital censor and wants there to be less horridness allowed on video sharing platforms.

March 24, 2021

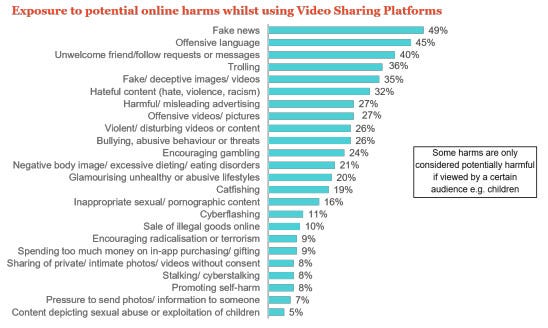

‘One in three video-sharing users find hate speech’, heads the Ofcom press release, citing its own recent study, which is based on a survey of UK users of video sharing platforms (VSPs) conducted in September and October of last year. Even the full findings document doesn’t seem to reveal the sample size, however.

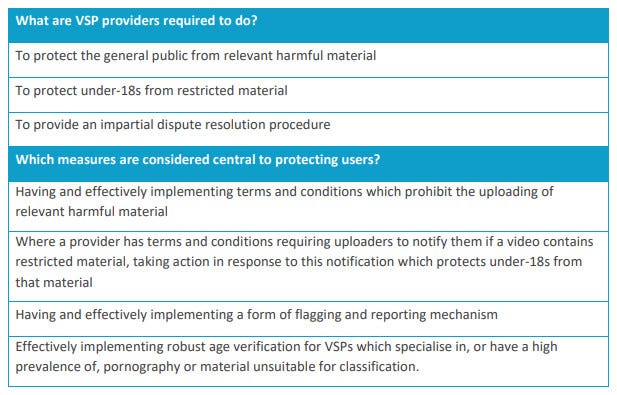

The purpose of the research seems to have been to support Ofcom’s proposed new guidance for protecting VSP users from harm. Here’s the table summarising those recommendations.

As you can see, the word ‘relevant’ is doing a lot of work here, so what does it mean in this context?

The only definition of ‘relevant harmful material’ we get is: incitement to violence or hatred against particular groups; and content which would be considered a criminal offence under laws relating to terrorism, child sexual abuse material, and racism and xenophobia.

The first stipulation is intriguing. As the second one highlights, there are already laws relating to racism and xenophobia, so what is this ‘hatred against particular groups’ that Ofcom thinks requires an extra layer of censorship? Here’s what Ofcom says in the footnotes of its press release: ‘Figures on hateful content are based on the combined data for exposure to videos or content encouraging hate towards others, videos or content encouraging violence towards others, and videos or content encouraging racism.’

So some of those 32% of respondents may have merely seen a video in which hate was somehow encouraged. The criteria for determining such an event are not revealed. As if that wasn’t broad and vague enough, Ofcom tells us a whopping 70% of respondents said they have been exposed to a potentially harmful experience in the last three months. Here’s the full list of those experiences and what proportion of users suffered them.

Here’s what Ofcom reckons VSP owners should do about all this digital hate and harm:

- Clear rules around uploading content. VSPs should have clear, visible terms and conditions which prohibit users from uploading the types of harmful content set out in law. These should be enforced effectively.
- Easy flagging and complaints for users. Companies should implement tools that allow users to quickly and effectively report or flag harmful videos, signpost how quickly they will respond, and be open about any action taken. Providers should offer a route for users to formally raise issues or concerns with the platform, and to challenge decisions through dispute resolution. This is vital to protect the rights and interests of users who upload and share content.
- Restricting access to adult sites. VSPs with a high prevalence of pornographic material should put in place effective age-verification systems to restrict under-18s’ access to these sites and apps.

The first bullet merely stresses that illegal content shouldn’t be allowed, which seems somewhat redundant. The second mandates an effective complaints and dispute resolution capacity, which, while laudable, is presumably already in place with all major VSPs. The third says children should be better protected from accessing porn, which once more is hard to argue with and is presumably directed at porn-specific sites.

It’s hard to know what Ofcom is trying to achieve with this. Most of its proposed measures seem to duplicate existing law or best practice, so in that respect this just seems to be an attempt to be seen to be steering a process that is already well underway. Alternatively, introducing such broad definitions of hate and harm could be designed to prepare the ground for a more actively censorious approach from Ofcom in future.
